ARM’s Decadal Vision, Part 3: Data Services

Published: 22 April 2022

ARM envisions advanced data analytics, flexible computing environments, improved software tools

Editor’s note: This is the third article in a series about the four themes in ARM’s Decadal Vision document.

At the heart of the Atmospheric Radiation Measurement (ARM) user facility are its observations. They start as raw data from instruments, get refined so they are more useful to researchers, and are checked for quality. In some cases, experts use these processed data to create higher-end data products that sharpen high-resolution modeling simulations.

In the past 30 years, ARM has amassed more than 11,000 data products totaling over 3.2 petabytes of data.

That’s the capacity of about 50,000 smartphones, at 64 gigabytes per phone.
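The smartphone comparison is plain unit arithmetic. As a minimal sketch, assuming decimal units (1 petabyte = 1,000,000 gigabytes, which the article does not specify) and a hypothetical helper named `phones_needed`:

```python
# Assumption: decimal (SI) units, so 1 PB = 1,000,000 GB.
GB_PER_PB = 1_000_000


def phones_needed(archive_pb: float, phone_gb: float = 64) -> float:
    """Return how many phones of `phone_gb` capacity would hold `archive_pb` petabytes."""
    return archive_pb * GB_PER_PB / phone_gb


# 3.2 PB archive, 64 GB per phone (both figures from the article).
print(round(phones_needed(3.2)))
```

With binary units the count would shift slightly, so the figure is best read as an order-of-magnitude illustration.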

With that much data on hand, ARM is taking steps over the next decade to upgrade its field measurements, data analytics, and data-model interoperability. Upgrades and aspirations are outlined in a 31-page Decadal Vision document, written by ARM Technical Director Jim Mather.

The document also outlines plans for data services.

Big Leaps

ARM Data Services Manager Giri Prakash says that when he started at Oak Ridge National Laboratory in Tennessee in 2002, ARM had about 16 terabytes of measurements stored away.

“I looked at that as big data,” he says.

By 2010, the total was 200 terabytes. In 2016, ARM reached 1 petabyte of data.

Collecting those first 16 terabytes took nearly 10 years. Today, ARM, a U.S. Department of Energy (DOE) Office of Science user facility, collects that much data about every six days. Its data trove is growing at a rate of 1 petabyte a year.
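The two rates quoted here can be cross-checked against each other. The sketch below assumes decimal units (1 petabyte = 1,000 terabytes) and a 365-day year; the function name `yearly_volume_pb` is illustrative only:

```python
# Assumptions: 1 PB = 1,000 TB and a 365-day year.
TB_PER_PB = 1_000
DAYS_PER_YEAR = 365


def yearly_volume_pb(tb_per_batch: float, days_per_batch: float) -> float:
    """Convert 'tb_per_batch terabytes every days_per_batch days' to petabytes per year."""
    return tb_per_batch / days_per_batch * DAYS_PER_YEAR / TB_PER_PB


# 16 terabytes about every six days (figures from the article).
rate = yearly_volume_pb(16, 6)
print(f"{rate:.2f} PB per year")
```

The result lands a little under 1 petabyte a year, which is consistent with the rounded growth rate given above.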

Prakash credits this meteoric rise to more complex data, more sophisticated instruments, more high-resolution measurements (mostly from radars), more field campaigns, and more high-resolution models.

Rethinking Data Management

How to handle all these data?

“We had to completely rethink our data management and re-create much of it from the ground up,” says Prakash. “We need end-to-end data services competence to streamline and automate more of the data process. We refreshed almost 70 data-processing tools and workflows in the last four years.”

That effort has brought recognition. Since 2020, the ARM Data Center has been recognized as a CoreTrustSeal repository, has been named a DOE Office of Science PuRe (Public Reusable Research) Data Resource, and has earned membership in the World Data System.

All these important professional recognitions require a rigorous review process.

“ARM is special,” says Prakash, who represents the United States on the International Science Council’s Committee on Data (CODATA). “We have an operationally robust and mature data service, which allows us to process quality data and distribute them to users.”

An ARM Vision

ARM measurements, free to researchers worldwide, flow continuously from about 460 field instruments at six fixed and mobile observatories.

The instruments operate in climate-critical regions across the world. They record data that are important for understanding the Earth’s weather and climate. There are ARM data on clouds, precipitation, aerosols, surface energy fluxes, water vapor, and other baseline atmospheric factors.

Mather says that, as part of the Decadal Vision, increasingly complex ARM data will get a boost from emerging and increasingly sophisticated data management practices, hardware, and software.

Data services, “as the name suggests,” says Mather, “is in direct service to enable data analysis.”

That service draws on different kinds of ARM assets, he says, including physical infrastructure, software tools, and new policies and frameworks for software development.

Meanwhile, adds Prakash, ARM employs FAIR guidelines for its data management and stewardship. FAIR stands for Findability, Accessibility, Interoperability, and Reuse.

Flexible Computing Environments

One step in ARM’s decadal makeover will be to improve its operational and research computing infrastructure. Greater computing, memory, and storage assets will make it easier to couple high-volume data sets (from scanning radars, for instance) with high-resolution models. More computing power and new software tools will also support machine learning and other techniques required by big-data science.

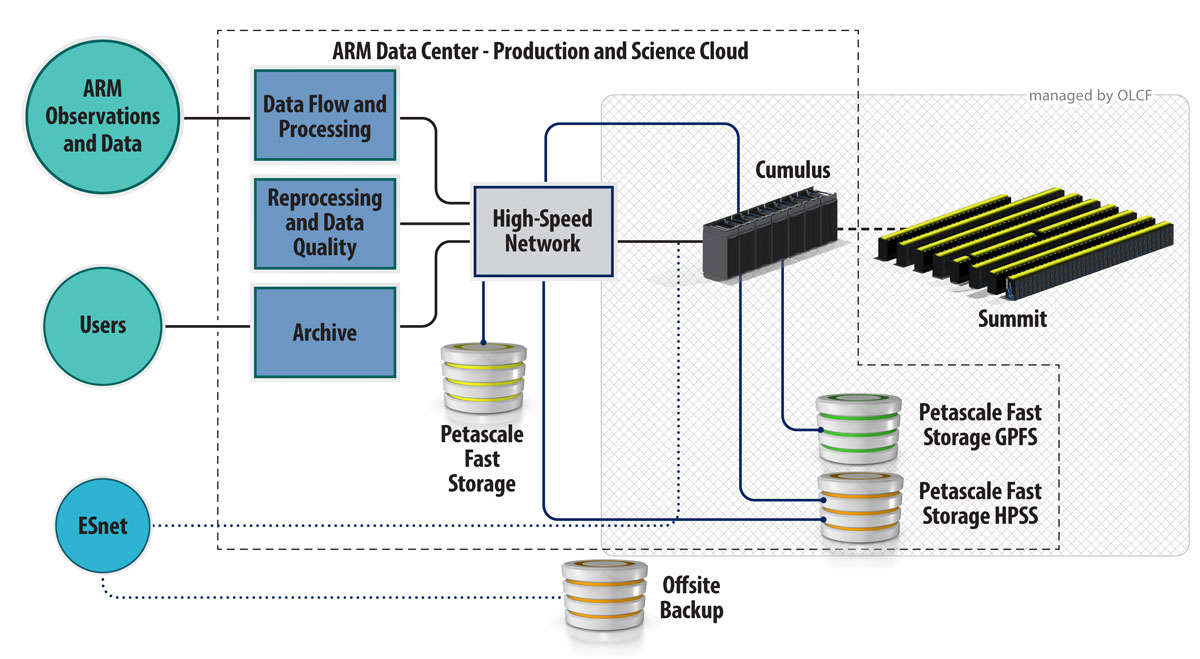

The ARM Data Center already supports the user facility’s computational and data-access needs. But the data center is being expanded to strengthen its present mix of high-performance and cloud computing resources by providing seamless access to data and computing.

Mather laid out the challenge: ARM has more than 2,500 active datastreams rolling in from its hundreds of instruments. Processing bottlenecks are possible when you add the pressure of those datastreams to the challenge of managing petabytes of information. Volumes like that could make it harder to achieve science advances with ARM data.

To get around that, in the realm of computing hardware, says Mather, ARM will provide “more powerful computation services” for data processed and stored at the ARM Data Center.

‘The Need Continues to Grow’

Some of that ramped-up computing power came online in the last few years to support a new ARM modeling framework, where large-eddy simulations (LES) require a lot of computational horsepower.

So far, the LES ARM Symbiotic Simulation and Observation (LASSO) activity has created a large library of simulations informed by ARM data. To atmospheric researchers, these exhaustively screened and streamlined data bundles are proxies for the atmosphere. For example, they make it easier to test the accuracy of climate models.

Conceived in 2015, LASSO first focused on shallow cumulus clouds. Now, data bundles are being developed for a deep-convection scenario. Some of those data will be available through a beta release as early as May 2022.

Still, “the need continues to grow” for more computing power, says Mather. “Looking ahead, we need to continually assess the magnitude and nature of the computing need.”

ARM has a new Cumulus high-performance computing cluster, which provides more than 16,000 processing cores to ARM users. The average laptop has four to six cores. (Read the April 2022 ARM article announcing the new cluster.)

As needed, ARM users can apply for more computing power at other DOE facilities, such as the National Energy Research Scientific Computing Center (NERSC). Access to external cloud computing resources is also available through DOE.

Prakash envisions a menu of user-friendly tools, including Jupyter Notebook, available to ARM users to work with ARM data. The tools are designed to let users move from a laptop or workstation to environments where they can access petabytes of ARM data at a time.

Says Prakash: “Our aim is to provide ARM data, wherever the computer power is available.”

Software Tools

“Software tools are also critical,” says Mather. “We expect single cases of upcoming (LASSO) simulations of deep convection to be on the order of 100 terabytes each. Mining those data will require sophisticated tools to visualize, filter, and manipulate data.”

Imagine, for instance, he says, LASSO trying to visualize convective cloud fields in three dimensions. It’s a daunting software challenge.

Challenges like that require more engagement than ever with the atmospheric research community to identify the right software tools.

More engagement helped shape the Decadal Vision document. To gather information for it, Mather drew from workshops and direct contact with users and staff to cull ideas on increasing ARM’s science impact.

Given the growth in data volume, there was a clear need to give a broader audience of data users even more seamless access to the ARM Data Center’s resources. They already have access to ARM data, analytics, computing resources, and databases. ARM data users can also select data by date range or conditional statements.
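Selecting data by date range or by a conditional statement amounts to filtering records on two kinds of tests. The sketch below is purely illustrative and is not ARM's Data Discovery interface: the `Sample` record, its fields, the `select` helper, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Sample:
    # Hypothetical record layout, not an actual ARM datastream schema.
    day: date
    temperature_c: float


def select(
    samples: Iterable[Sample],
    start: date,
    end: date,
    condition: Callable[[Sample], bool],
) -> List[Sample]:
    """Keep samples dated within [start, end] that also satisfy `condition`."""
    return [s for s in samples if start <= s.day <= end and condition(s)]


# Made-up values used only to exercise the filter.
samples = [
    Sample(date(2022, 4, 1), -3.5),
    Sample(date(2022, 4, 10), 4.0),
    Sample(date(2022, 4, 20), 12.5),
    Sample(date(2022, 5, 2), 15.0),
]

# Date range: April 2022. Conditional statement: temperature above freezing.
april_above_freezing = select(
    samples,
    start=date(2022, 4, 1),
    end=date(2022, 4, 30),
    condition=lambda s: s.temperature_c > 0.0,
)
print([s.day.isoformat() for s in april_above_freezing])
```

Passing the condition as a function keeps the date-range test and the conditional test independent, so either can change without touching the other.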

For deeper access, ARM is developing an ARM Data Workbench.

Prakash envisions the workbench as an extension of the current Data Discovery interface, one that will “provide transformative knowledge discovery” by offering an integrated data-computing ecosystem. It would allow users to discover data of interest using advanced data queries. Users could perform advanced data analytics by using ARM’s vast trove of data as well as software tools and computing resources.

The workbench will allow users to tap into open-source visualization and analytic tools. (Open-source code, free to anyone, can also be redistributed or modified.) They could also use technologies such as Apache Cassandra or Apache Spark for large-scale data analytics.

By early 2023, says Prakash, a preliminary version of the workbench will be online. Getting there will require more hours of consultations with ARM data users to nail down their workbench needs.

From that point on, he adds, the workbench will be “continuously developed” until the end of fiscal year 2023.

Prakash calls the workbench, with its enhanced access and open-source tools, “a revolutionary way to interact with ARM data.”

Open-Source Software Practices

ARM already uses a large number of software tools. But Mather and others “recognize an increasing need for users to develop code in an open manner,” according to the Decadal Vision document.

“It is critical we hear from the community regarding their needs as we develop software tools,” says Mather. “But we can also benefit from the software development they are already doing.”

ARM has started to leverage open-source software practices by creating libraries for reading and plotting a variety of ARM data, he says. “But there is much more we can do.”

ARM recently restructured its open-source code capabilities. The restructuring followed engagement with the ARM user community to establish best practices for code-sharing. The aim of these best practices is to develop new data products and perhaps find a way to speed the development of machine-learning applications.

In the restructuring, ARM added two more data service “organizations” to the software-sharing site GitHub. Now, there are three.

In addition to hosting open-source software, organizations can host community code. That’s a name for easy-to-use code developed for the user community. Researchers may use the code and are expected to contribute to it. There are guidelines, however, for contributing to the code.

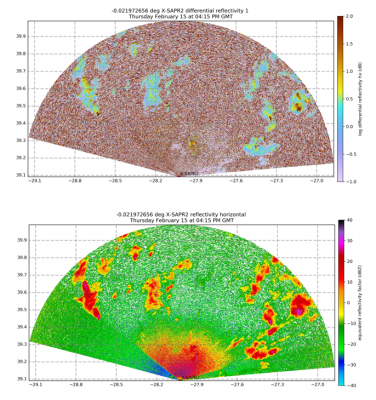

The first ARM organization on GitHub is ARM-DOE, which only hosts ARM-supported data repositories. Two of the repositories are the Python ARM Radar Toolkit (Py-ART) and the Atmospheric data Community Toolkit (ACT).

Adam Theisen, ARM’s instrument operations manager, called Py-ART “ARM’s flagship open-source software package.” Py-ART contains algorithms and utilities that help users work with weather radar data.

ACT is a toolkit for exploring and analyzing atmospheric time-series data in varying dimensions.

ACT and Py-ART are also readily usable by ARM infrastructure, says Theisen, “whether it’s a retrieval, a quality-control technique, new data visualizations, or more. If a user can contribute to these open-source packages, ARM can utilize them.”

ARM’s two new GitHub organizations are ARM-Synergy and ARM-Development.

In ARM-Synergy, principal investigators working with ARM and DOE’s Atmospheric System Research (ASR) program can host both open-source software and community code.

ARM-Development is where ARM staff and others can test and experiment with software and share ideas.

Time to ‘Expand and Grow’

Theisen is frank about the challenges of the open-source world.

“It is hard for groups to change their coding practices and supported languages,” he says. “However, once the benefit of making that switch outweighs the effort, real progress can happen.”

In the last few years, ARM’s Data Quality (DQ) Office has switched its primary programming language to Python. As the DQ Office converted its “great wealth of code,” says Theisen, the office included some of it in ACT for broader community access.

The DQ Office has also been using Py-ART in this transition to Python and open-source tools.

“I see ARM’s efforts in the open-source arena continuing to expand and grow,” says Theisen.

In particular, he notes the great potential of enlarging open-source efforts to make data products.

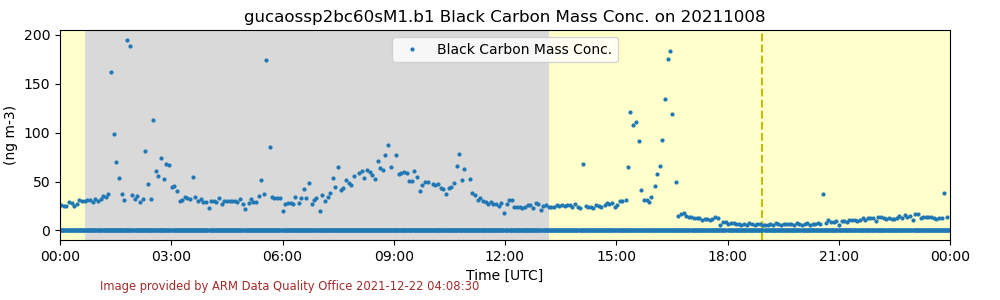

As an example, Theisen offered PySP2, hosted in ARM-DOE on GitHub. PySP2 is used for processing measurements from the latest generation of the single-particle soot photometer (SP2).

Normally, such processing is manual and intensive. But PySP2 software saves time. It came out of a collaboration between SP2 instrument mentor Art Sedlacek of Brookhaven National Laboratory in New York and software developer Bobby Jackson of Argonne National Laboratory in Illinois.

The software is now used in the routine automated ingest for SP2 data. For that, Theisen credits Eddie Schuman, who is on the data ingest development team at Pacific Northwest National Laboratory in Washington state.

“Long story short, we are looking to expand our open-source offerings and presence at ARM,” says Theisen.

The ARM/ASR Open Science Virtual Workshop 2022, scheduled from May 10 to 13, will feature talks and tutorials on emerging hardware and software for managing data. (Registration is open until May 8.)

“Within ARM, we have a limited capacity to develop the processing and analysis codes that are needed,” says Mather. “But these open-source software practices offer a way for us to pool our development resources to implement the best ideas and minimize any duplication of effort.”

In the end, he adds, “this is all about enhancing the impact of ARM data.”
